I did not want Quizco's AI agent to be a one-shot prompt that blindly spits out trivia.

I wanted it to act more like a product teammate:

- look at what people are already playing

- decide whether new quizzes are even needed

- generate drafts in a strict structure

- verify and de-duplicate them

- hand the final decision to a human reviewer

That led me to an architecture that is much closer to an agent loop than a simple "generate quiz" button.

I also wanted the post to have a single systems-view image, so I made this architecture diagram for the agent loop:

Everything runs inside a single Node.js backend service; the planner, writer, and verifier are internal modules of that backend rather than separate services.

Workflow

From platform signals to a published quiz

Every agent run starts by reading product signals and ends with a human approval decision.

- 01

Read platform demand and queue pressure

Before generating anything, the backend inspects active quiz tags, attempt counts, recent agent history, pending quiz load, and durable memory from earlier runs.

This lets the agent react to real demand instead of inventing random topics in isolation.

- 02

Choose whether to generate at all

The planner uses the OpenAI Responses API, can call internal tools, and can use web search when freshness matters. It is allowed to stand down if demand is weak or the queue is already full.

A run can return generate_quizzes, stand_down, or observe instead of forcing content every time.

- 03

Generate strict JSON quiz drafts

The writer model produces exactly 5 questions, 4 answer options per question, a confidence score, a format, a topic, and a trend summary. The writer can be OpenAI or Anthropic depending on config.

The prompt also tells the model to avoid reusing recent titles, angles, or question concepts.

- 04

Validate safety, structure, and factual quality

Generated drafts are checked for unsafe topics, weak structure, duplicate questions, missing citations for web-backed ideas, and factual issues through a verifier that can search the web question by question.

If a draft fails, the agent revises it and tries again up to the configured revision limit instead of publishing low-quality output.

- 05

Block near-duplicate quizzes with embeddings

Once a draft passes validation, I create an embedding and compare it against existing quizzes with MongoDB vector search so the agent does not keep publishing the same idea in a new wrapper.

The duplicate threshold is explicit, so repetition is treated like a product bug rather than a model quirk.

- 06

Store the result in a review buffer

Passing drafts are written to QuizPending with planner notes, source citations, verification reports, confidence, and revision metadata attached.

I wanted every pending quiz to carry enough context for a reviewer to understand why it exists.

- 07

Approve to publish, reject to teach the system

The React admin dashboard lets me approve or reject pending quizzes. Approval creates a real Quiz plus its Question documents. Rejection saves a reason that becomes future memory for the planner.

Nothing is auto-published. Human review is the final gate.
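The revise-and-retry behavior in step 04 is easy to get subtly wrong, so here is a minimal sketch of how such a loop could look. It is not Quizco's actual code: the type names, the `reviseUntilValid` helper, and the injected `validate` and `revise` functions are all hypothetical. In the real service these would be async model calls, and they are synchronous here only to keep the example small.

```typescript
// Hypothetical shapes; the real Quizco types are not shown in this post.
interface QuizDraftSketch {
  title: string;
  revision: number;
}

interface ValidationOutcome {
  ok: boolean;
  issues: string[];
}

type DraftValidator = (draft: QuizDraftSketch) => ValidationOutcome;
type DraftReviser = (draft: QuizDraftSketch, issues: string[]) => QuizDraftSketch;

// Validate a draft; on failure, revise and retry up to maxRevisions times.
// Returns the passing draft, or null once the revision budget is spent.
function reviseUntilValid(
  draft: QuizDraftSketch,
  validate: DraftValidator,
  revise: DraftReviser,
  maxRevisions: number
): QuizDraftSketch | null {
  let current = draft;
  for (let attempt = 0; attempt <= maxRevisions; attempt++) {
    const outcome = validate(current);
    if (outcome.ok) return current;
    if (attempt === maxRevisions) break; // budget spent: skip instead of publishing
    current = revise(current, outcome.issues);
  }
  return null;
}
```

The important property is that a draft can only leave the loop by passing validation or by being dropped; there is no path where a failing draft falls through to the review buffer.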

Planning before generation

The most important design decision was making planning a first-class step.

Instead of asking a model to directly invent quizzes, I built a planner that can inspect the state of the platform first. That planner gets a small tool belt and can decide that the correct answer is to do nothing.

- get_platform_state

- get_recent_agent_history

- get_agent_memory

- web_search

That mattered for two reasons.

First, the agent needs to respect product reality. If there are already too many pending quizzes, or if a topic was just rejected, generating more of the same thing is noise.

Second, freshness is uneven. Some topics can be chosen from internal demand alone, while others benefit from current web context. The planner only reaches for web search when that freshness actually improves the decision.
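One way to make "do nothing" a first-class result is to model the planner's output as a tagged union. The sketch below is an assumption about shape, not the real schema: only the three action names (generate_quizzes, stand_down, observe) come from the system itself, while the other fields and the `topicsToWrite` helper are hypothetical.

```typescript
// Hypothetical decision shape; only the three action names are taken from the post.
type PlannerDecisionSketch =
  | { action: "generate_quizzes"; topics: string[]; usedWebSearch: boolean }
  | { action: "stand_down"; reason: string }
  | { action: "observe"; reason: string };

// Only generate_quizzes hands topics to the writer.
// stand_down and observe are valid outcomes, not errors.
function topicsToWrite(decision: PlannerDecisionSketch): string[] {
  if (decision.action === "generate_quizzes") {
    return decision.topics;
  }
  return [];
}
```

Because the compiler narrows on `action`, the writer can never be called with topics from a run that chose to stand down.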

I also added a deterministic fallback ranker. If the planner call fails, Quizco can still choose topics based on attempts, active quiz counts, and penalties for recently pending, rejected, or already-approved topics. That keeps the system resilient instead of brittle.
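A fallback ranker along these lines could be sketched as follows. The inputs (attempts, active quiz counts, and the three kinds of recency penalty) are the ones named above, but the scoring formula, the penalty weights, and every identifier here are assumptions made for illustration.

```typescript
// Hypothetical signal shape; the weights below are illustrative, not Quizco's.
interface TopicSignalSketch {
  tag: string;
  attempts: number;        // player attempts on quizzes with this tag
  activeQuizCount: number; // live quizzes already covering the tag
  recentlyPending: boolean;
  recentlyRejected: boolean;
  recentlyApproved: boolean;
}

function fallbackScore(s: TopicSignalSketch): number {
  // Attempts per existing quiz: many attempts on few quizzes suggests unmet demand.
  let score = s.attempts / (s.activeQuizCount + 1);
  if (s.recentlyPending) score *= 0.5;   // already waiting in the review queue
  if (s.recentlyRejected) score *= 0.25; // a human just said no
  if (s.recentlyApproved) score *= 0.5;  // just covered
  return score;
}

// Highest score first; ties break on tag name so the order is deterministic.
function rankTopicsFallback(signals: TopicSignalSketch[], limit: number): string[] {
  return signals
    .slice()
    .sort((a, b) => fallbackScore(b) - fallbackScore(a) || a.tag.localeCompare(b.tag))
    .slice(0, limit)
    .map((s) => s.tag);
}
```

With no model call and no randomness, the same platform state always produces the same topic list, which makes a degraded run easy to reason about afterwards.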

Generation is only one stage of the pipeline

Once the planner selects topics, the writer prompt asks for a strict quiz JSON object, not free-form prose.

The draft must include:

- a title and description

- a topic and tags

- a format such as standard, speed_round, deep_dive, or streak

- an agentConfidence score

- exactly 5 questions

- exactly 4 options per question
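The shape listed above can be pinned down as a type plus a structural check. This is a sketch under assumptions: the four format names and agentConfidence come from the list, while the remaining field names, the `structureIssues` helper, and the exact checks are hypothetical.

```typescript
// Format names are from the post; other identifiers are hypothetical.
const QUIZ_FORMATS = ["standard", "speed_round", "deep_dive", "streak"];

interface DraftQuestionSketch {
  prompt: string;
  options: string[];
}

interface QuizDraftShape {
  title: string;
  description: string;
  topic: string;
  tags: string[];
  format: string;
  agentConfidence: number;
  questions: DraftQuestionSketch[];
}

// Returns a list of human-readable problems; an empty list means the shape is valid.
function structureIssues(draft: QuizDraftShape): string[] {
  const issues: string[] = [];
  if (QUIZ_FORMATS.indexOf(draft.format) === -1) issues.push(`unknown format: ${draft.format}`);
  if (!Number.isFinite(draft.agentConfidence)) issues.push("agentConfidence must be a finite number");
  if (draft.questions.length !== 5) issues.push("expected exactly 5 questions");

  const seenPrompts = new Set<string>();
  draft.questions.forEach((q, i) => {
    const key = q.prompt.trim().toLowerCase();
    if (seenPrompts.has(key)) issues.push(`question ${i + 1} repeats an earlier prompt`);
    seenPrompts.add(key);
    if (q.options.length !== 4) issues.push(`question ${i + 1} needs exactly 4 options`);
    if (new Set(q.options).size !== q.options.length) issues.push(`question ${i + 1} has duplicate options`);
  });
  return issues;
}
```

Returning a list of issues rather than a boolean gives a revision step something concrete to fix.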

After that, the draft goes through multiple filters:

- safety validation blocks harmful or tragedy-heavy topics

- structure validation catches broken question counts, duplicate prompts, or invalid options

- citation validation ensures web-influenced drafts still carry sources

- the verifier uses web search to fact-check questions that contain factual claims

- the embedding check blocks drafts that are too close to existing quizzes

This was the difference between "AI content" and "reviewable product content." I wanted the agent to earn its way into the inbox.
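To illustrate the embedding check at the end of that list, here is a rough in-memory stand-in. Quizco delegates the comparison to MongoDB vector search; this sketch instead computes cosine similarity directly so it can run on its own. The threshold value of 0.9 and both function names are assumptions, since the real threshold is not stated here.

```typescript
// Assumed value for illustration; the real threshold is configured elsewhere.
const DUPLICATE_THRESHOLD = 0.9;

// Cosine similarity between two embeddings of equal length.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("embedding dimensions differ");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // a zero vector matches nothing
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// A draft is blocked when any existing quiz embedding is at or above the threshold.
function isNearDuplicate(
  candidate: number[],
  existing: number[][],
  threshold: number = DUPLICATE_THRESHOLD
): boolean {
  return existing.some((e) => cosineSimilarity(candidate, e) >= threshold);
}
```

Keeping the threshold as a named constant is what makes it tunable: if reviewers keep seeing the same idea in a new wrapper, the number goes down, and no prompt has to change.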

Human review was non-negotiable

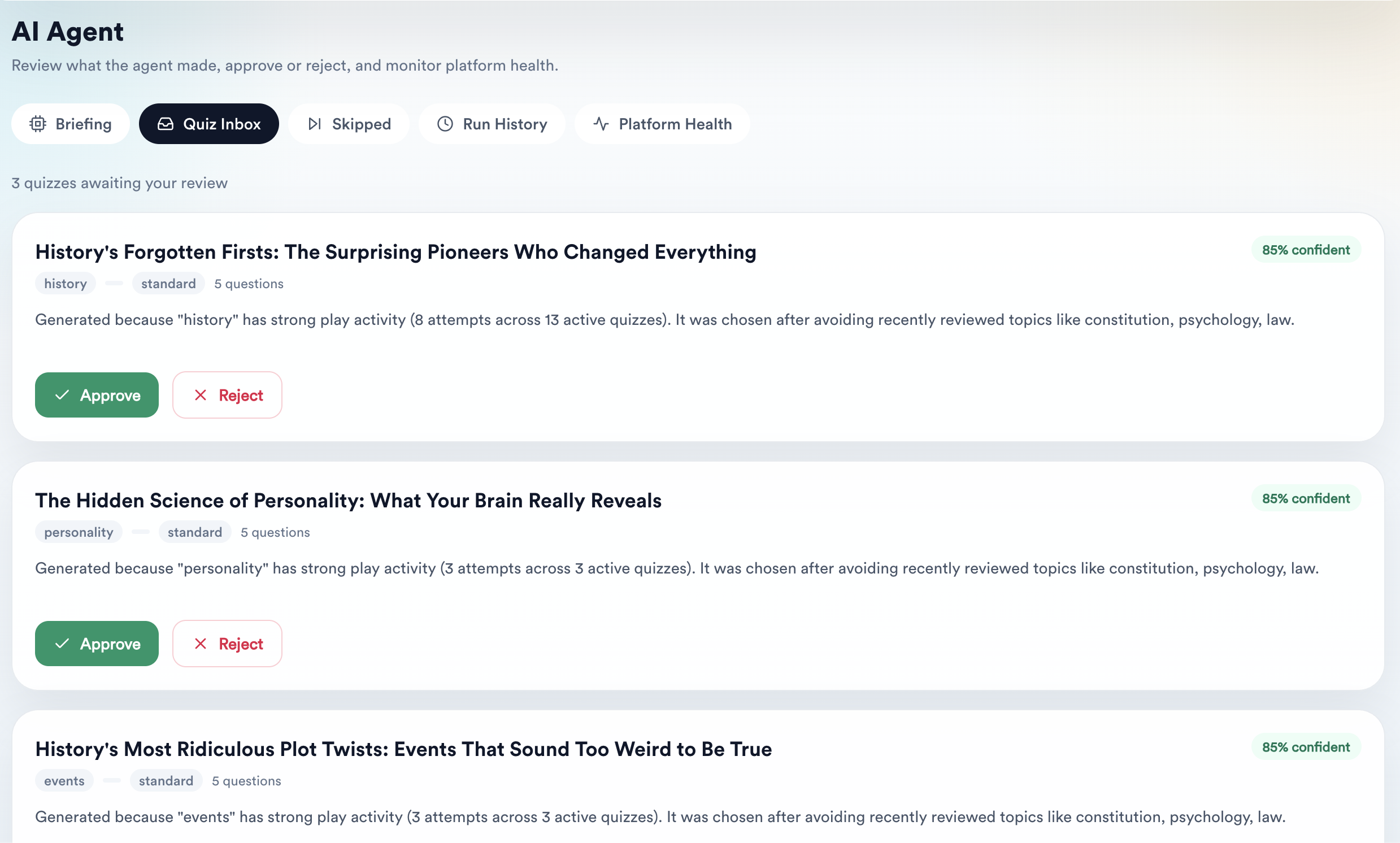

I built a dedicated React admin surface at /agent instead of hiding the workflow behind logs.

The dashboard is split into a few focused views:

- Briefing summarizes the latest run and current agent state

- Quiz Inbox shows pending quizzes with confidence, topic context, and approve/reject actions

- Skipped records why the agent chose not to generate certain candidates

- Run History exposes recent runs for auditability

- Platform Health shows preflight status, last run info, and run controls

That dashboard turns the agent from a black box into an inspectable system. If a quiz is rejected, the rejection reason is saved. If a quiz is approved, it is converted into a real Quiz and its Question records.
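The two review outcomes can be sketched as a pure function over a pending quiz. Everything named here is hypothetical (the field names, `applyReview`, and the rule that a rejection reason must be non-empty are my assumptions for the example); the real handlers write to MongoDB rather than returning objects.

```typescript
// Hypothetical shapes standing in for QuizPending, Quiz, and Question documents.
interface PendingQuizSketch {
  id: string;
  title: string;
  questions: { prompt: string; options: string[] }[];
  reviewStatus: "pending" | "approved" | "rejected";
  rejectionReason?: string;
}

type ReviewActionSketch = { kind: "approve" } | { kind: "reject"; reason: string };

interface ReviewResultSketch {
  pending: PendingQuizSketch;
  // Present only on approval: the quiz and question records to create.
  published?: {
    quiz: { title: string; questionCount: number };
    questions: { prompt: string; options: string[] }[];
  };
}

function applyReview(pending: PendingQuizSketch, action: ReviewActionSketch): ReviewResultSketch {
  if (pending.reviewStatus !== "pending") throw new Error("quiz was already reviewed");

  if (action.kind === "reject") {
    const reason = action.reason.trim();
    if (reason === "") throw new Error("a rejection needs a reason"); // assumed rule
    return { pending: { ...pending, reviewStatus: "rejected", rejectionReason: reason } };
  }

  return {
    pending: { ...pending, reviewStatus: "approved" },
    published: {
      quiz: { title: pending.title, questionCount: pending.questions.length },
      questions: pending.questions.map((q) => ({ prompt: q.prompt, options: q.options.slice() })),
    },
  };
}
```

Note that published content only ever appears on the approve branch, which is the code-level version of "nothing is auto-published."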

Workflow

The learning loop after each run

The agent gets better because reviews and quiz performance are written back into memory, not because the model magically remembers.

- 01

Approval and rejection outcomes are captured explicitly

Each pending quiz ends up as approved, rejected, or still pending, and rejected quizzes carry a human-written reason.

This gives the system concrete product feedback instead of vague intuition.

- 02

Agent memory is rebuilt from recent runs and review outcomes

After each run and after each review action, I refresh a singleton memory document that summarizes topic performance, review insights, and recent run summaries.

The memory stores both what the agent tried and how humans responded to it.

- 03

Published agent quizzes create new attempt signals

Once an approved quiz is live, players interact with it. Those attempts become the next round of topic demand and performance data.

This closes the loop between generated content and real user behavior.

- 04

The planner reads that memory before choosing again

On the next cycle, the planner sees recent approvals, rejections, pending load, and topic performance before selecting new angles.

That is what makes the system iterative instead of stateless.
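As a rough illustration of the memory rebuild in step 02, the sketch below folds review outcomes into per-topic counts and collected rejection reasons. It covers only the review-outcome part of the memory; the real document also holds topic performance and run summaries. All names are hypothetical.

```typescript
// Hypothetical shapes; the real memory document holds more than this.
interface ReviewedQuizSketch {
  topic: string;
  outcome: "approved" | "rejected" | "pending";
  rejectionReason?: string;
}

interface TopicMemorySketch {
  approved: number;
  rejected: number;
  pending: number;
  rejectionReasons: string[];
}

// Rebuild from scratch on every call, so the memory never drifts from the source records.
function rebuildTopicMemory(reviews: ReviewedQuizSketch[]): Map<string, TopicMemorySketch> {
  const memory = new Map<string, TopicMemorySketch>();
  for (const review of reviews) {
    const entry = memory.get(review.topic) || {
      approved: 0,
      rejected: 0,
      pending: 0,
      rejectionReasons: [],
    };
    entry[review.outcome] += 1;
    if (review.outcome === "rejected" && review.rejectionReason) {
      entry.rejectionReasons.push(review.rejectionReason);
    }
    memory.set(review.topic, entry);
  }
  return memory;
}
```

Rebuilding instead of incrementally patching is a deliberate trade-off in this sketch: it costs a little more per refresh, but a bug in one review action cannot leave the memory permanently wrong.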

Why the data model mattered

I split the system into a few purpose-built collections so the pipeline stays auditable.

| Collection | Why it exists | What it stores |
|---|---|---|
| AgentRun | Run-level observability | trigger, duration, planner action, topics selected, citations, skips, errors |
| AgentMemory | Durable learning between runs | topic performance, review insights, recent runs |
| QuizPending | Human review buffer | generated draft, citations, verification report, confidence, review status |
| Quiz + Question | Published learning content | approved quizzes and their questions |
| Recommendation | Recommendation surface for players | recommendation metadata and expiry windows |

That last piece is worth calling out honestly: I already laid down the Recommendation model and the toast-based recommendation UI in the frontend, but the current runAgentCycle is still focused on generating and reviewing quizzes rather than automatically dispatching recommendations. The surface is there; the publishing loop came first.

What I would improve next

If I keep pushing Quizco's agent further, the next upgrades are pretty clear.

- automatic recommendation dispatch so approved agent content can reach the right users without manual glue

- richer health analytics so the dashboard matches the ambition of the backend pipeline

- stronger reviewer tooling around why a draft passed validation and which sources were used to verify it

The biggest takeaway from building this was simple: the useful part of an AI agent is not the model call. It is the loop around the model call.

Planning, guardrails, validation, memory, and review are what made Quizco's agent feel dependable enough to ship.